AI-Native Accelerators and the Partner Delivery Model

The SI delivery model has not changed in fifteen years, and it is starting to show

If you have run a modernization practice inside a system integrator at any point in the last decade, you know the shape of the engagement model by heart. The pursuit phase produces a fixed-fee assessment proposal, usually scoped between eight and sixteen weeks. The assessment phase deploys a senior architect, two or three mid-level architects, and a small army of analysts to produce a current-state document, a target-state recommendation, and a high-level roadmap. The delivery phase is then sold separately, usually under a different commercial model, often by a different account team, and frequently against a baseline that the assessment phase has already started to drift from.

The economics of this model have worked because the alternatives were worse. Internal architects do not exist at the scale enterprise modernization programs require. Off-the-shelf tools handle pieces of the assessment but not the synthesis. Hyperscaler partner programs (AWS MAP, MAP for Windows, Azure modernization funds) reward exactly this kind of structured assessment work, which means the SI is paid by the customer and partially funded by the partner for delivering the same artifact. The model still works, but it is becoming uncompetitive against AI-native delivery, and partners who do not adapt will lose share to those who do.

The shift is not subtle. An AI-native modernization practice produces the assessment artifact in two to four weeks instead of the eight to sixteen weeks the traditional model scopes, with consistency that does not depend on which architects happened to be on the engagement. The delivery margin profile changes, the partner funding cycle compresses, and the number of engagements a practice can run in parallel rises enough to materially change the economics of the practice. This article is about what that shift looks like in practice, and how a partner-led modernization business should be thinking about it.

What the partner economics actually look like under MAP and MAP for Windows

The AWS Migration Acceleration Program and its Windows-specific variant are the two highest-volume modernization funding mechanisms in the AWS partner ecosystem. The funding model rewards three things: a credible migration plan, a defensible business case, and demonstrable progress against the plan once delivery begins. The same is true on the Azure side, where the Azure Migrate and Modernize program funds a similar set of artifacts under a similar set of conditions. GCP's modernization programs follow the same structural logic.

The partner economics under these programs are straightforward. A faster, more consistent assessment artifact unlocks partner funding faster, which means the SI is carrying less working capital against the engagement. A more defensible business case shortens the customer's internal approval cycle, which means delivery begins sooner and the practice utilization curve looks better. A roadmap that the customer's architects review and accept without major revision means the delivery phase begins on a baseline that does not need to be renegotiated.

The platform-side numbers that drive this are the ones a partner practice should care about. Assessment time compression of 40 to 60 percent. A reduction in production incidents during subsequent migration of roughly 70 percent, which is what makes the second engagement easier to sell. Infrastructure cost reductions averaging around 30 percent post-migration, which is the number the customer cites when the SI asks for a reference. These are not platform marketing numbers in the abstract. They are the numbers that decide whether the customer renews the engagement and whether the partner program will fund the next one.

The capacity multiplier nobody is talking about

The conversation about AI in SI delivery has so far focused on individual productivity. Architects produce more output per week. Analysts move faster through the assessment workflow. That story is true, but it understates what is actually happening: the unit of capacity in a modernization practice is shifting from the architect-week to the platform deployment.

Under the old model, a practice could run as many parallel modernization engagements as it had senior architects to staff them. Senior architects are the binding constraint. They are expensive, they are scarce, and they are not interchangeable, which is why most SI practices cap their concurrent engagement count at whatever number their senior bench will support without burning out.

Under the AI-native model, the platform performs the analytical heavy lifting, and the senior architect's role shifts toward review, contextualization, and customer-facing decision support. One senior architect can credibly oversee three to four concurrent engagements rather than one to two. The practice's concurrent engagement capacity goes up accordingly, and the bench composition shifts toward fewer senior generalists and more specialists who go deep on a specific industry or target stack.
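The capacity arithmetic in the paragraph above can be made concrete with a minimal sketch. The oversight ranges (one to two engagements per senior architect under the old model, three to four under the AI-native model) come from the text. The bench size of five and the names `OversightRange` and `concurrent_capacity` are assumptions chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class OversightRange:
    """Concurrent engagements one senior architect can oversee (low, high)."""
    low: int
    high: int


# Ranges quoted in the article text.
TRADITIONAL = OversightRange(low=1, high=2)
AI_NATIVE = OversightRange(low=3, high=4)


def concurrent_capacity(senior_architects: int, oversight: OversightRange) -> tuple[int, int]:
    """Return the (low, high) number of engagements the bench can run in parallel."""
    if senior_architects < 0:
        raise ValueError("senior_architects must be non-negative")
    return senior_architects * oversight.low, senior_architects * oversight.high


if __name__ == "__main__":
    bench = 5  # hypothetical bench size, not a figure from the article
    print("traditional:", concurrent_capacity(bench, TRADITIONAL))
    print("AI-native:  ", concurrent_capacity(bench, AI_NATIVE))
```

With the same hypothetical bench of five, the range moves from 5-10 concurrent engagements to 15-20. The sketch treats oversight capacity as the only constraint, which is a simplification: delivery staff, pipeline, and customer readiness also bound what a practice can run in parallel.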

For a partner practice that has been waiting for senior architect hiring to catch up to the pipeline, this is the change that matters most. The bench constraint relaxes. The pipeline-to-revenue ratio improves. The practice can chase opportunities it would previously have had to decline.

What changes in the pursuit motion

A pursuit team that walks into a customer conversation with an AI-native delivery model has a different conversation than one walking in with the traditional model. The assessment timeline they can credibly commit to is weeks rather than months. The fixed-fee number they can defend is materially lower because the architect-hours behind it are lower. The reference customers they can cite have faster time-to-value stories. The partner funding they can attach to the engagement is closer to the deal because the partner side knows the assessment artifact will hold up.

The competitive dynamic this creates is worth naming. SI competitors who are still pricing the assessment phase against eight to sixteen weeks of architect time will lose pursuits where the customer has already had an AI-native conversation with a different partner. They will lose them on price and they will lose them on credibility, because customers who have seen the platform-generated artifact understand what is now possible and will not accept the older delivery model in a competitive evaluation.

The right response, for any SI practice that has not yet adopted this model, is to adopt the platform and rebuild the pursuit narrative around what it changes, rather than discounting the traditional engagement to match. Customers do not want a cheaper version of the old engagement. They want the new engagement.

The deployment model that makes this practical

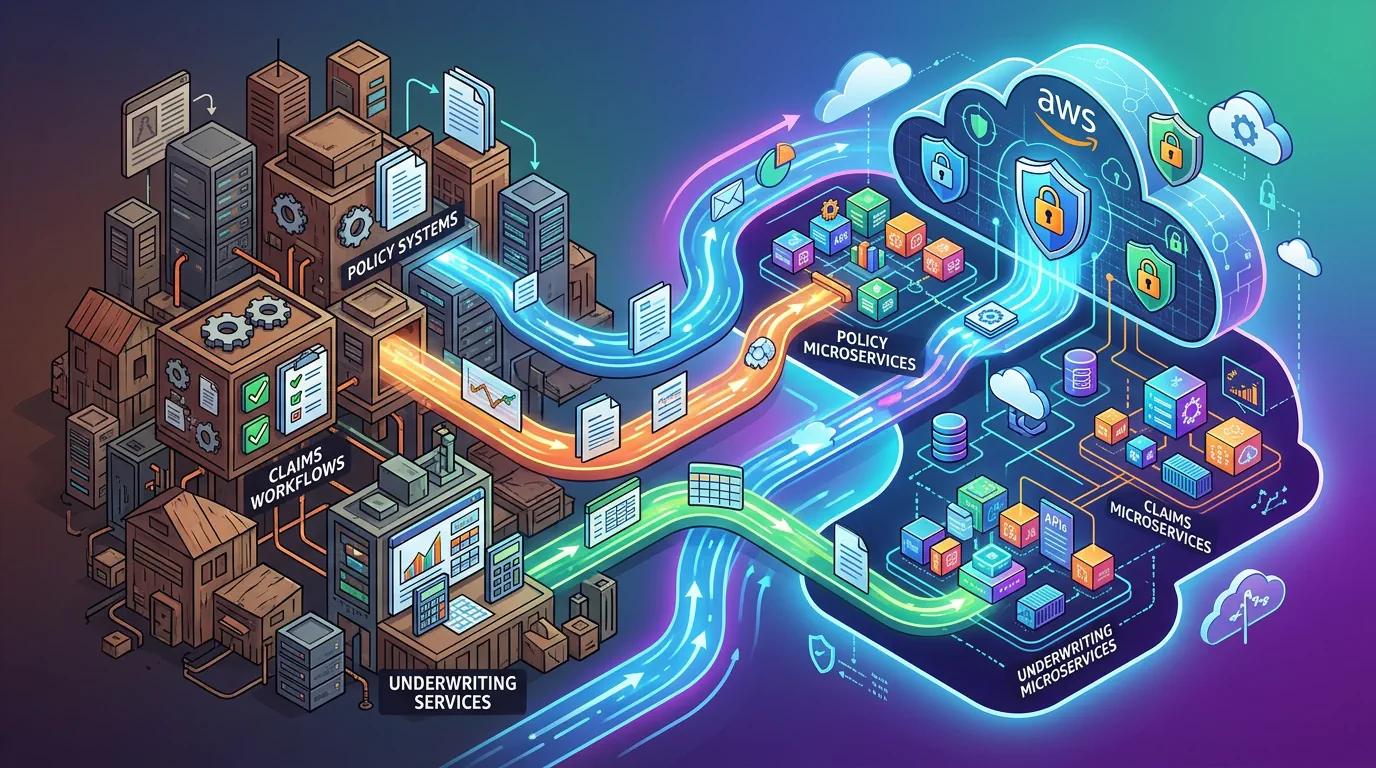

One concern that comes up consistently in partner conversations is whether an AI-native modernization platform creates data sovereignty or compliance friction inside customer environments. The honest answer is that it can, depending on how the platform is built. A SaaS platform that requires the customer's codebase to be uploaded to a vendor cloud is a non-starter for any regulated customer, and increasingly for non-regulated customers as well, now that data residency expectations have hardened.

ArchWeaver's deployment model addresses this directly. The platform deploys as containerized microservices inside the customer network. The footprint is modest enough to fit inside almost any enterprise infrastructure: 8 cores, 16 GB of RAM, 100 GB of storage. No data leaves the customer perimeter. The platform is ISO certified and SOC 2 Type 2 compliant. For an SI practice that delivers into financial services, healthcare, government, or any cross-border regulated environment, this is the deployment model that allows the platform to be used without an extended security review on every engagement.
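For a delivery team sizing a customer environment before deployment, the footprint quoted above can be turned into a simple pre-flight check. The sketch below is illustrative only: it is not part of ArchWeaver, it assumes the quoted figures (8 cores, 16 GB of RAM, 100 GB of storage) are minimums, and the names `Resources` and `shortfalls` are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Resources:
    cpu_cores: int
    ram_gb: int
    storage_gb: int


# Footprint quoted in the article, treated here as a minimum (an assumption).
MIN_FOOTPRINT = Resources(cpu_cores=8, ram_gb=16, storage_gb=100)


def shortfalls(available: Resources, required: Resources = MIN_FOOTPRINT) -> dict[str, int]:
    """Return how far each resource falls short of the requirement.

    An empty dict means the environment meets or exceeds every figure.
    """
    gaps: dict[str, int] = {}
    for field in ("cpu_cores", "ram_gb", "storage_gb"):
        missing = getattr(required, field) - getattr(available, field)
        if missing > 0:
            gaps[field] = missing
    return gaps


if __name__ == "__main__":
    # Hypothetical customer environment, for illustration only.
    candidate = Resources(cpu_cores=4, ram_gb=16, storage_gb=50)
    print(shortfalls(candidate))
```

The resource figures are passed in rather than probed from the host, so the same check works against numbers taken from a customer's capacity sheet during pursuit, before anyone has access to the environment.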

For partner practices, the operational benefit of this is that the platform can be deployed once into a customer environment and used across multiple engagements with that customer over time. The amortization of the deployment effort across engagements changes the unit economics of repeat work, which is exactly the kind of customer relationship that high-margin partner practices are built on.

How a partner practice should think about adoption

The honest framing for any SI leader evaluating this is that AI-native modernization is not optional, and the question is not whether to adopt it but how quickly. Customer expectations have already shifted. Hyperscaler partner programs are starting to favor partners who can demonstrate AI-native delivery. The competitive pressure from practices that have already adopted is real and is accelerating.

A practical adoption sequence works in three phases. First, stand up a small reference engagement with a friendly customer where the platform-led methodology can be proven in a controlled setting. Second, build the pursuit and delivery playbooks around what that reference engagement teaches you, including the partner funding motion and the customer-facing pricing model. Third, scale the practice's bench composition to match the new model, which usually means hiring fewer senior generalists and more industry-specific specialists.

The practices that move first will set the customer expectations the rest of the market has to meet. The practices that wait will spend the next two years explaining to customers why their delivery model takes longer than the competition. That is not a position any partner practice wants to be in, and it is the position that the current delivery model is heading toward.

The partner economics of modernization are about to change. The practices that lean into the change will own the next cycle.